Task

Multimodal speaker classification systems typically assume the availability of all modalities during inference. However, in real-world scenarios such as multimedia retrieval, surveillance, and teleconferencing, one or more modalities may be unavailable due to occlusion, sensor failure, or data corruption. Additionally, most existing systems are trained and tested on the same language, limiting their applicability in multilingual environments.

This challenge aims to push the boundaries of:

- Missing modality learning

- Multimodal robustness

- Cross-lingual speaker classification

The goal of the POLYSIM 2026 challenge is to identify speakers across multiple languages when either the face or voice modality is missing. Participants must develop models that can handle three scenarios: (1) voice-only identification when facial data is unavailable, (2) face-only identification when vocal data is unavailable, and (3) multimodal identification when both modalities are available. The challenge focuses on maintaining robust speaker identification performance across different languages (polyglot) even when one modality is completely absent, reflecting real-world constraints where complete multimodal data is not always available.

The task is closed-set speaker classification using:

- Audio (voice)

- Visual (face)

Participants must design a single unified model that can handle different testing conditions without retraining.

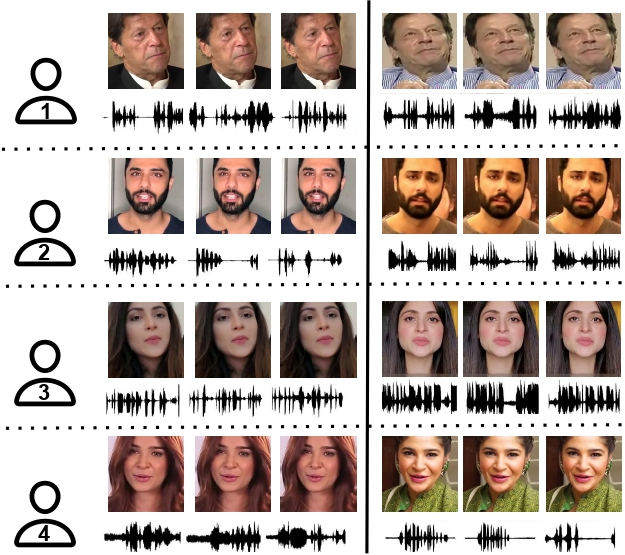

Figure 1: The POLYSIM 2026 Challenge focuses on speaker identification across multiple languages with missing modalities (either face or voice may be absent).

The challenge includes four task settings, covering multimodal, missing-modality, and cross-lingual scenarios.

P3. In-Language Multimodal

- Training: Audio + Face modalities

- Testing: Audio + Face modalities

- Language: Same language in training and testing

This is the standard multimodal setting where both modalities are fully available and no language shift is present.

P4. Missing-Modality (Audio-Only)

- Training: Audio + Face modalities

- Testing: Audio modality only

- Language: Same language in training and testing

The face modality is completely missing at test time. Models must perform speaker classification using audio only, without retraining.

P5. Cross-Lingual Multimodal

- Training: Audio + Face modalities

- Testing: Audio + Face modalities

- Language: Different languages for training and testing

This setting evaluates the ability of models to generalize across languages when both modalities are available.

P6. Cross-Lingual Missing-Modality

- Training: Audio + Face modalities

- Testing: Audio modality only

- Language: Different languages for training and testing

This is the most challenging scenario, combining:

- Cross-lingual testing

- Missing face modality during inference

| Setting | Training Modalities | Testing Modalities | Language |

|---|---|---|---|

| P3 | Audio + Face | Audio + Face | Same |

| P4 | Audio + Face | Audio only | Same |

| P5 | Audio + Face | Audio + Face | Cross-lingual |

| P6 | Audio + Face | Audio only | Cross-lingual |

Evaluation protocol

Performance will be evaluated using:

- Top-1 Accuracy

For more information please see the evaluation plan.

- Scores are computed separately for P3, P4, P5, and P6

- Final ranking is based on the average performance across all settings